Computer components are not usually expected to transform entire businesses and industries, but a graphics processing unit Nvidia released in 2023 has done just that.

The H100 data centre chip has added more than US$1-trillion to Nvidia’s value and turned the company into an AI kingmaker overnight. It’s shown investors that the buzz around generative artificial intelligence is translating into real revenue, at least for Nvidia and its most essential suppliers.

Demand for the H100 is so great that some customers are having to wait as long as six months to receive it.

1. What is the Nvidia H100 chip?

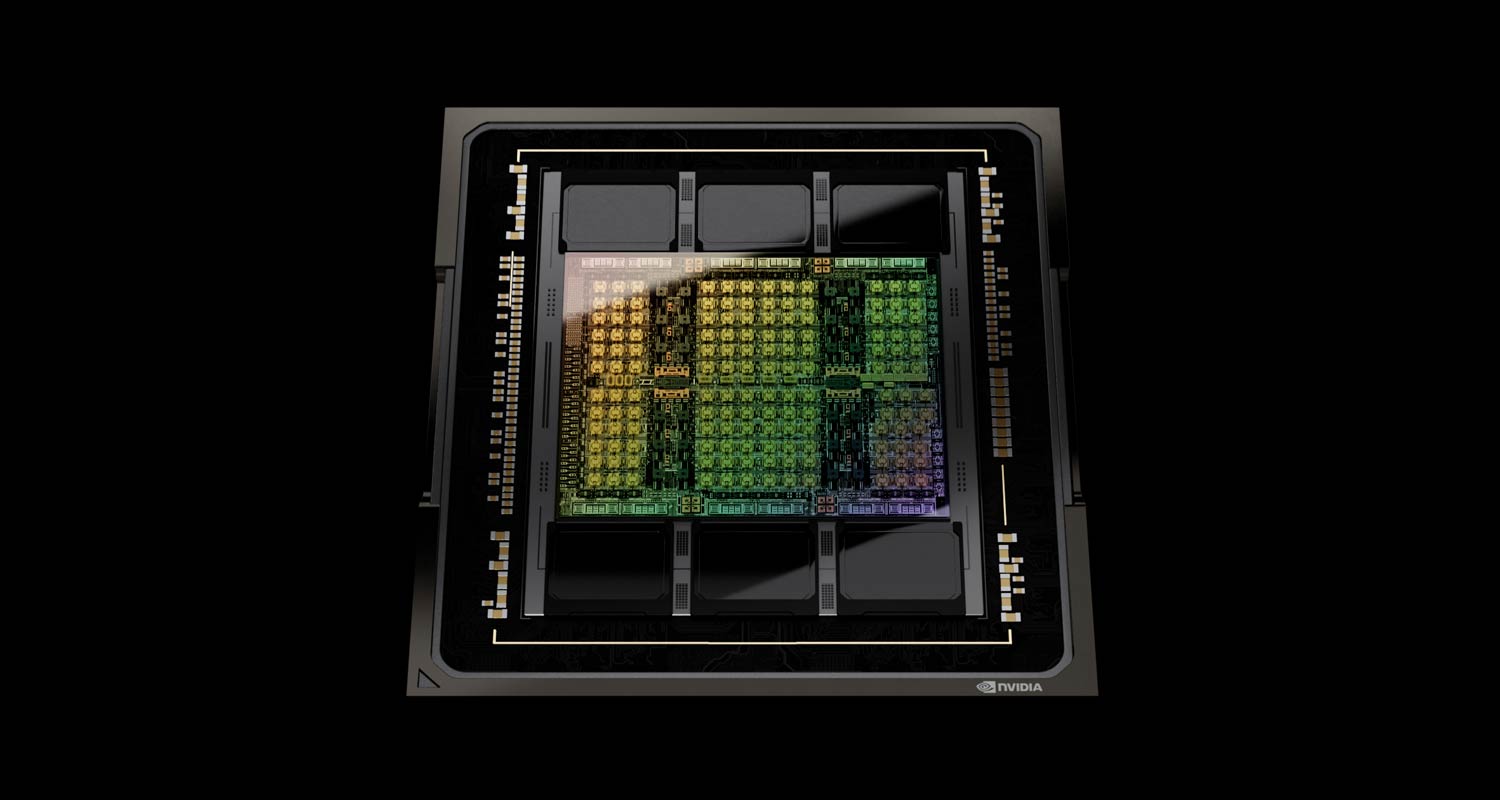

The H100, whose name is a nod to computer science pioneer Grace Hopper, is a graphics processor. It’s a beefier version of a type of chip that normally lives in PCs and helps gamers get the most realistic visual experience. But it’s been optimised to process vast volumes of data and computation at high speeds, making it a perfect fit for the power-intensive task of training AI models. Nvidia, founded in 1993, pioneered this market with investments dating back almost two decades, when it bet that the ability to do work in parallel would one day make its chips valuable in applications outside of gaming.

2. Why is the H100 so special?

Generative AI platforms learn to complete tasks such as translating text, summarising reports and synthesising images by training on huge volumes of preexisting material. The more they see, the better they become at things like recognising human speech or writing job cover letters. They develop through trial and error, making billions of attempts to achieve proficiency and sucking up huge amounts of computing power in the process. Nvidia says the H100 is four times faster than the chip’s predecessor, the A100, at training these so-called large language models, or LLMs, and is 30 times faster at replying to user prompts. For companies racing to train LLMs to perform new tasks, that performance edge can be critical.
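To put those multiples in concrete terms, here is a minimal Python sketch of the arithmetic. The 4x and 30x factors are Nvidia's own claims as quoted above; the 40-day training run and the 3-second reply are hypothetical baselines chosen only for illustration, and the function name `time_with_speedup` is likewise invented for this example.

```python
def time_with_speedup(baseline: float, speedup: float) -> float:
    """Return how long a job takes once it runs `speedup` times faster."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return baseline / speedup


# Hypothetical baseline: a training run that takes 40 days on the older A100.
a100_training_days = 40.0
# Nvidia's claimed 4x advantage for training large language models.
h100_training_days = time_with_speedup(a100_training_days, 4.0)

# Hypothetical baseline: a reply that takes 3 seconds on the older A100.
a100_reply_seconds = 3.0
# Nvidia's claimed 30x advantage for replying to user prompts.
h100_reply_seconds = time_with_speedup(a100_reply_seconds, 30.0)

print(f"Training: {a100_training_days} days -> {h100_training_days} days")
print(f"Reply: {a100_reply_seconds} s -> {h100_reply_seconds:.2f} s")
```

Real-world gains depend on the model, the software and the rest of the system, so figures like these are best read as an upper-bound illustration rather than a forecast.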

3. How did Nvidia become a leader in AI?

The Santa Clara, California-based company is the world leader in graphics chips, the bits of a computer that generate the images you see on the screen. The most powerful of those are built with hundreds of processing cores that perform multiple simultaneous threads of computation, modelling complex physics like shadows and reflections. Nvidia’s engineers realised in the early 2000s that they could retool graphics accelerators for other applications, by dividing tasks up into smaller lumps and then working on them at the same time. Just over a decade ago, AI researchers discovered that their work could finally be made practical by using this type of chip.
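The idea of dividing a task into smaller lumps and working on them at the same time can be sketched in a few lines of Python. This is a loose analogy that runs on an ordinary processor using the standard library's thread pool, not code for Nvidia's chips, and the function names are invented for this example. Python threads also do not speed up this kind of arithmetic much in practice; the point is the shape of the approach: split, process each piece independently, then combine.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(values, chunk_count):
    """Cut a list into at most `chunk_count` roughly equal pieces."""
    size = -(-len(values) // chunk_count)  # ceiling division
    return [values[i:i + size] for i in range(0, len(values), size)]


def sum_of_squares(chunk):
    """The small job each worker does on its own piece."""
    return sum(v * v for v in chunk)


def parallel_sum_of_squares(values, workers=4):
    """Split the work, hand each piece to a worker, then add up the results."""
    if not values:
        return 0
    chunks = split_into_chunks(values, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partial_sums = list(pool.map(sum_of_squares, chunks))
    return sum(partial_sums)


numbers = list(range(10))
print(split_into_chunks(numbers, 4))
print(parallel_sum_of_squares(numbers))
```

A graphics chip applies the same pattern at a far larger scale, with many cores each handling a small slice of the data at once.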

4. Does Nvidia have any real competitors?

Nvidia controls about 80% of the market for accelerators in the AI data centres operated by Amazon.com’s AWS, Alphabet’s Google Cloud and Microsoft’s Azure. Those companies’ in-house efforts to build their own chips, and rival products from chip makers such as AMD and Intel, haven’t made much of an impression on the AI accelerator market so far.

5. How does Nvidia stay ahead of its competitors?

Nvidia has rapidly updated its offerings, including software to support the hardware, at a pace that no other firm has yet been able to match. The company has also devised various cluster systems that help its customers buy H100s in bulk and deploy them quickly. Chips like Intel’s Xeon processors are capable of more complex data crunching, but they have fewer cores and are much slower at working through the mountains of information typically used to train AI software. Nvidia’s data centre division posted an 81% increase in revenue to US$22-billion in the final quarter of 2023.

6. How do AMD and Intel compare to Nvidia?

AMD, the second largest maker of computer graphics chips, unveiled a version of its Instinct line in June aimed at the market that Nvidia’s products dominate. The chip, called MI300X, has more memory to handle workloads for generative AI, AMD CEO Lisa Su told the audience at an event in San Francisco. “We are still very, very early in the life cycle of AI,” she said in December. Intel is bringing specific chips for AI workloads to the market but acknowledged that, for now, demand for data centre graphics chips is growing faster than for the processor units that were traditionally its strength.

Nvidia’s advantage isn’t just in the performance of its hardware. The company invented something called CUDA, a programming language for its graphics chips that allows them to be programmed for the type of work that underpins AI programs.

7. What is Nvidia planning on releasing next?

Later this year, the H100 will pass the torch to a successor, the H200, before Nvidia makes more substantial changes to the design with a B100 model further down the road. CEO Jensen Huang has acted as an ambassador for the technology and sought to get governments, as well as private enterprise, to buy early or risk being left behind by those who embrace AI. Nvidia also knows that once customers choose its technology for their generative AI projects, it’ll have a much easier time selling them upgrades than competitors hoping to draw users away.

(c) 2024 Bloomberg LP